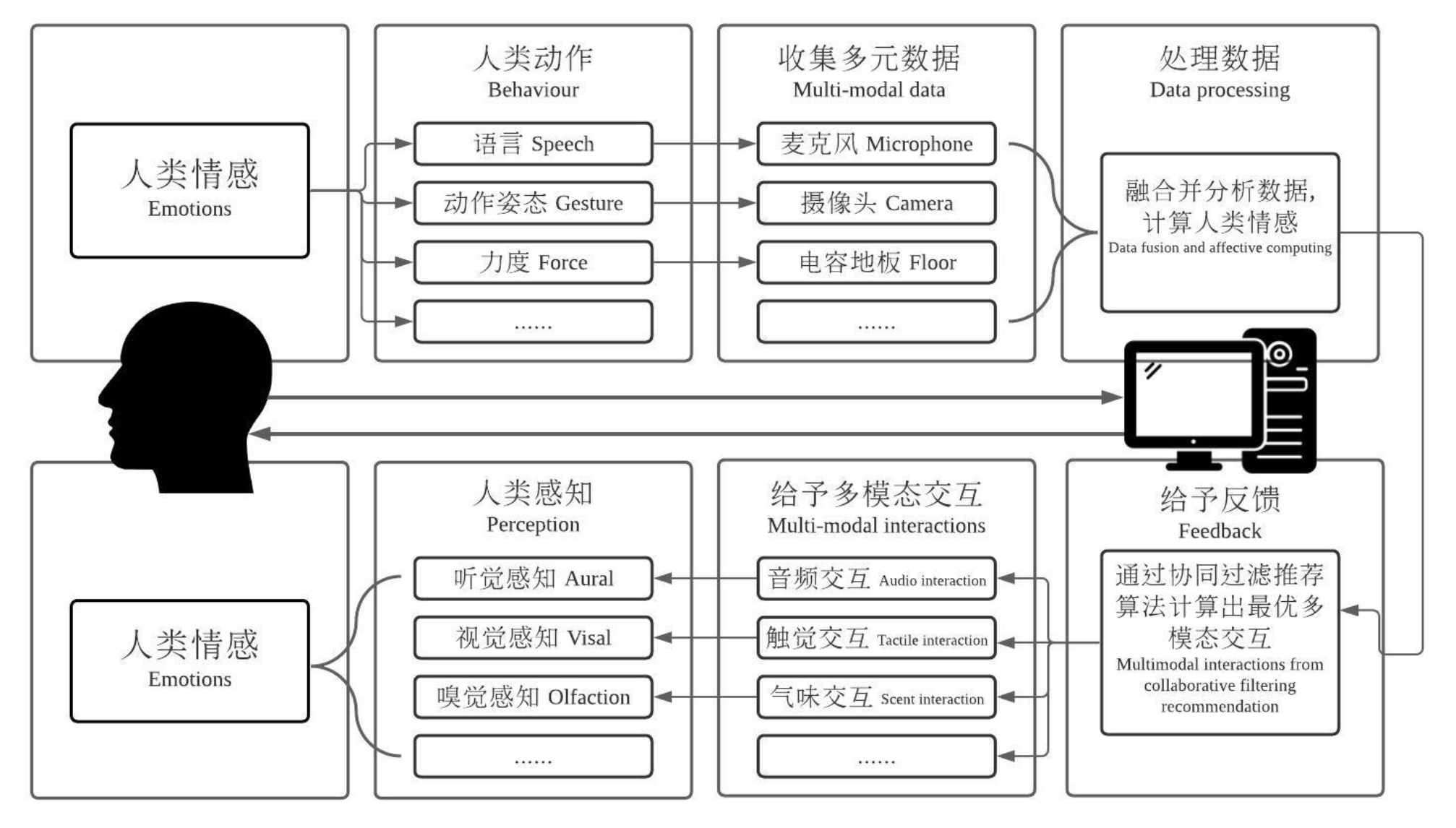

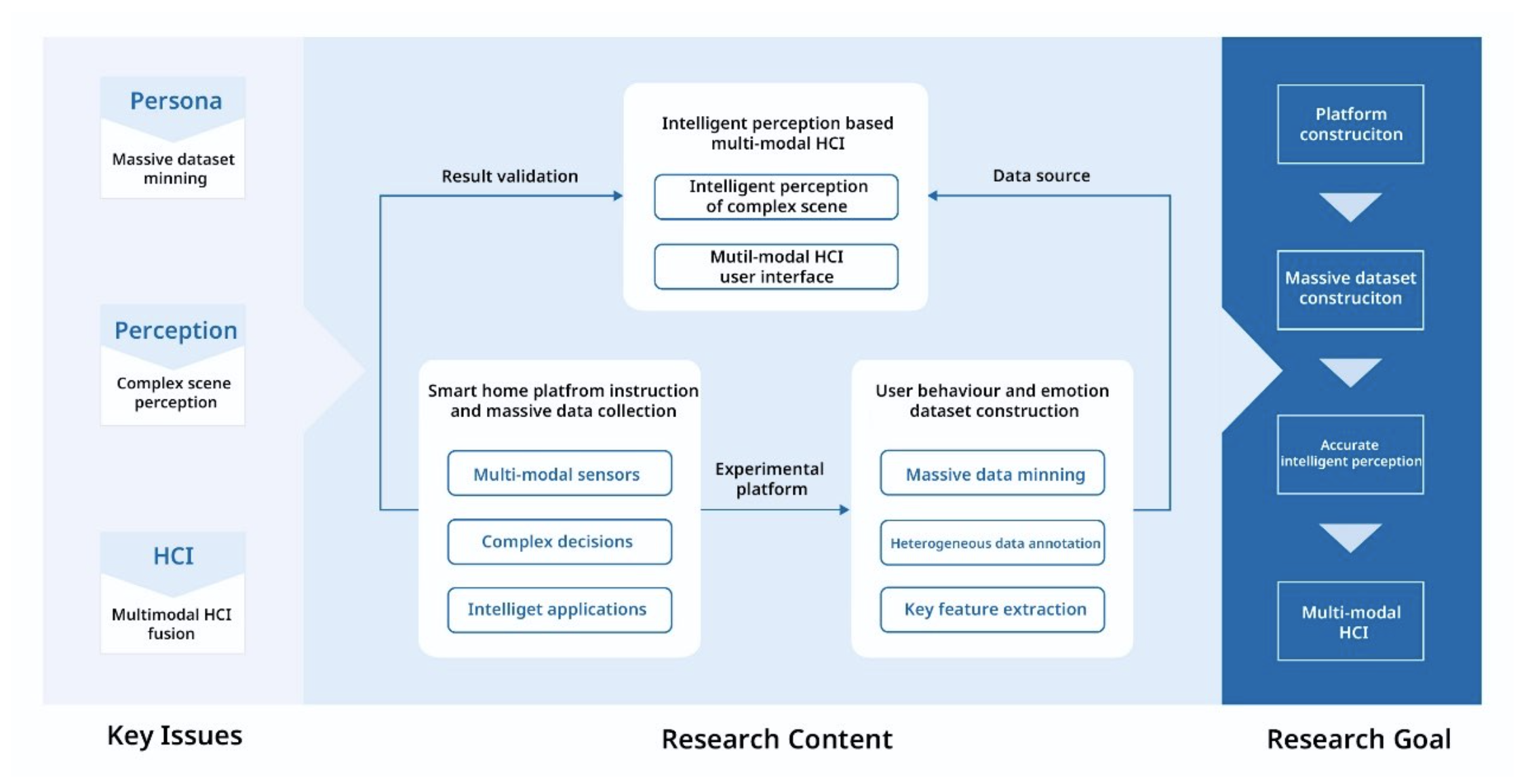

Humans inhabit a number of physical spaces every day, such as the home, the office, and other locations. The scope for human-computer interaction within these spaces is broad, and it offers wide opportunities for research and optimization, including for devices such as smart home appliances, smart speakers, and smart toys. In recent years, affective computing has emerged as a growing field of research. It studies the interaction between computing and human affect, and can help produce an intelligent living environment. This project plans to study the theory and methods of multimodal affective computing and their application in a human habitat. Using video, voice, and other input channels, we obtain the facial expressions, body postures, speech semantics, and other characteristics of humans in a habitat. These characteristics are classified and annotated into a database of human affect, which can open new research directions in natural human-computer interaction for an intelligent human habitat.

Underlying hardware environment construction

In-depth user study and user profiling

Establishing a coupling model of dynamic information and multimodal interaction